import pandas as pd18 Module 3 - Day 1

Topics

- Operating on tabular data using pandas

- Series, Data frame

- Access patterns , selecting

- Filtering

- Merging, joining, concatenating

- Groupby

- Reading/writing datasets using pandas

For today’s practice make use of notebook module3-day1.ipynb created in your enviroment. Shut down kernel for all previous notebooks (if in runing condition) by right cliking on notbeook on left hand side file browser

18.0.1 Pandas

pip install pandas

df = pd.read_csv("indexdata.csv")df| symbol | price | change | volume | |

|---|---|---|---|---|

| 0 | XFNBI | 154.275220 | 2.871246 | 3926 |

| 1 | ORGHT | 117.976702 | 4.539536 | 586 |

| 2 | VEBHV | 113.352080 | 0.663598 | 3688 |

| 3 | BYRIK | 104.213829 | 2.626015 | 1767 |

| 4 | VCRRD | 155.431605 | 0.468172 | 641 |

| 5 | PEQVY | 181.216668 | 0.427297 | 2738 |

| 6 | LQTQZ | 168.335733 | 3.521912 | 2670 |

| 7 | HPDAK | 155.960084 | 1.723922 | 4259 |

| 8 | XLHUI | 174.469648 | 3.745171 | 2770 |

| 9 | JEAZX | 148.716293 | 2.662496 | 1614 |

| 10 | QOZAM | 192.117607 | 4.139242 | 726 |

| 11 | XJAHU | 150.204350 | 3.106526 | 2688 |

| 12 | VLXDC | 178.734866 | 3.867853 | 4103 |

| 13 | PJQEK | 199.046119 | 3.209686 | 1592 |

| 14 | DWJDV | 148.753405 | 3.331682 | 2177 |

| 15 | ORJMD | 174.117528 | 3.180221 | 909 |

| 16 | DLJGR | 117.330438 | 0.346862 | 4092 |

| 17 | YCHHM | 144.874681 | 3.353055 | 1571 |

| 18 | WFNNB | 147.653321 | 3.776028 | 3195 |

| 19 | YNRZG | 128.869577 | 4.569919 | 2713 |

pd.read_csv("stocks.csv")| Symbol | Price | Date | Time | Change | Volume | |

|---|---|---|---|---|---|---|

| 0 | AA | 39.48 | 6/11/2007 | 9:36am | -0.18 | 181800 |

| 1 | AIG | 71.38 | 6/11/2007 | 9:36am | -0.15 | 195500 |

| 2 | AXP | 62.58 | 6/11/2007 | 9:36am | -0.46 | 935000 |

| 3 | BA | 98.31 | 6/11/2007 | 9:36am | 0.12 | 104800 |

| 4 | C | 53.08 | 6/11/2007 | 9:36am | -0.25 | 360900 |

| 5 | CAT | 78.29 | 6/11/2007 | 9:36am | -0.23 | 225400 |

url = "https://raw.githubusercontent.com/vikipedia/python-trainings/master/online_course/source/module2/wallet.csv"

wallet = pd.read_csv(url)wallet| Unnamed: 0 | date | category | description | debit | |

|---|---|---|---|---|---|

| 0 | 0 | 2021-03-07 14:53:28.377359 | Music | Amazon | 421.207327 |

| 1 | 1 | 2020-10-08 09:53:28.377359 | Food | Swiggy | 328.440080 |

| 2 | 2 | 2021-02-23 09:53:28.377359 | Books | Amazon | 244.679437 |

| 3 | 3 | 2020-11-01 14:53:28.377359 | Utility | Phone | 222.756318 |

| 4 | 4 | 2021-06-05 13:53:28.377359 | Books | Flipcart | 494.128492 |

| ... | ... | ... | ... | ... | ... |

| 95 | 95 | 2021-07-19 13:53:28.377359 | Utility | Phone | 388.671213 |

| 96 | 96 | 2021-01-12 19:53:28.377359 | Books | Flipcart | 467.554562 |

| 97 | 97 | 2021-03-25 11:53:28.377359 | Utility | Phone | 320.789434 |

| 98 | 98 | 2021-05-13 15:53:28.377359 | Travel | Taxi | 442.096469 |

| 99 | 99 | 2020-10-11 16:53:28.377359 | Food | Hotel | 100.455501 |

100 rows × 5 columns

exelurl = "https://github.com/vikipedia/python-trainings/raw/master/online_course/source/module2/wallet.xlsx"

wallet = pd.read_csv(exelurl)--------------------------------------------------------------------------- UnicodeDecodeError Traceback (most recent call last) Cell In[7], line 2 1 exelurl = "https://github.com/vikipedia/python-trainings/raw/master/online_course/source/module2/wallet.xlsx" ----> 2 wallet = pd.read_csv(exelurl) File /opt/tljh/user/lib/python3.12/site-packages/pandas/io/parsers/readers.py:1026, in read_csv(filepath_or_buffer, sep, delimiter, header, names, index_col, usecols, dtype, engine, converters, true_values, false_values, skipinitialspace, skiprows, skipfooter, nrows, na_values, keep_default_na, na_filter, verbose, skip_blank_lines, parse_dates, infer_datetime_format, keep_date_col, date_parser, date_format, dayfirst, cache_dates, iterator, chunksize, compression, thousands, decimal, lineterminator, quotechar, quoting, doublequote, escapechar, comment, encoding, encoding_errors, dialect, on_bad_lines, delim_whitespace, low_memory, memory_map, float_precision, storage_options, dtype_backend) 1013 kwds_defaults = _refine_defaults_read( 1014 dialect, 1015 delimiter, (...) 1022 dtype_backend=dtype_backend, 1023 ) 1024 kwds.update(kwds_defaults) -> 1026 return _read(filepath_or_buffer, kwds) File /opt/tljh/user/lib/python3.12/site-packages/pandas/io/parsers/readers.py:620, in _read(filepath_or_buffer, kwds) 617 _validate_names(kwds.get("names", None)) 619 # Create the parser. --> 620 parser = TextFileReader(filepath_or_buffer, **kwds) 622 if chunksize or iterator: 623 return parser File /opt/tljh/user/lib/python3.12/site-packages/pandas/io/parsers/readers.py:1620, in TextFileReader.__init__(self, f, engine, **kwds) 1617 self.options["has_index_names"] = kwds["has_index_names"] 1619 self.handles: IOHandles | None = None -> 1620 self._engine = self._make_engine(f, self.engine) File /opt/tljh/user/lib/python3.12/site-packages/pandas/io/parsers/readers.py:1898, in TextFileReader._make_engine(self, f, engine) 1895 raise ValueError(msg) 1897 try: -> 1898 return mapping[engine](f, **self.options) 1899 except Exception: 1900 if self.handles is not None: File /opt/tljh/user/lib/python3.12/site-packages/pandas/io/parsers/c_parser_wrapper.py:93, in CParserWrapper.__init__(self, src, **kwds) 90 if kwds["dtype_backend"] == "pyarrow": 91 # Fail here loudly instead of in cython after reading 92 import_optional_dependency("pyarrow") ---> 93 self._reader = parsers.TextReader(src, **kwds) 95 self.unnamed_cols = self._reader.unnamed_cols 97 # error: Cannot determine type of 'names' File pandas/_libs/parsers.pyx:574, in pandas._libs.parsers.TextReader.__cinit__() File pandas/_libs/parsers.pyx:663, in pandas._libs.parsers.TextReader._get_header() File pandas/_libs/parsers.pyx:874, in pandas._libs.parsers.TextReader._tokenize_rows() File pandas/_libs/parsers.pyx:891, in pandas._libs.parsers.TextReader._check_tokenize_status() File pandas/_libs/parsers.pyx:2053, in pandas._libs.parsers.raise_parser_error() File <frozen codecs>:322, in decode(self, input, final) UnicodeDecodeError: 'utf-8' codec can't decode byte 0xbd in position 56: invalid start byte

exelurl = "https://github.com/vikipedia/python-trainings/raw/master/online_course/source/module2/wallet.xlsx"

wallet = pd.read_excel(exelurl)wallet| Unnamed: 0 | date | category | description | debit | |

|---|---|---|---|---|---|

| 0 | 0 | 2021-03-07 14:53:28.377359 | Music | Amazon | 421.207327 |

| 1 | 1 | 2020-10-08 09:53:28.377359 | Food | Swiggy | 328.440080 |

| 2 | 2 | 2021-02-23 09:53:28.377359 | Books | Amazon | 244.679437 |

| 3 | 3 | 2020-11-01 14:53:28.377359 | Utility | Phone | 222.756318 |

| 4 | 4 | 2021-06-05 13:53:28.377359 | Books | Flipcart | 494.128492 |

| ... | ... | ... | ... | ... | ... |

| 95 | 95 | 2021-07-19 13:53:28.377359 | Utility | Phone | 388.671213 |

| 96 | 96 | 2021-01-12 19:53:28.377359 | Books | Flipcart | 467.554562 |

| 97 | 97 | 2021-03-25 11:53:28.377359 | Utility | Phone | 320.789434 |

| 98 | 98 | 2021-05-13 15:53:28.377359 | Travel | Taxi | 442.096469 |

| 99 | 99 | 2020-10-11 16:53:28.377359 | Food | Hotel | 100.455501 |

100 rows × 5 columns

moneycontrol = "https://www.moneycontrol.com/markets/indian-indices"

dfs = pd.read_html(moneycontrol) # it will give list of datframestype(dfs)listlen(dfs)6dfs[0]| Stock Name | Sector | LTP | Change | %Chg | |

|---|---|---|---|---|---|

| 0 | No Data | No Data | No Data | No Data | No Data |

dfs[1]| Stock Name | Sector | LTP | Change | %Chg | |

|---|---|---|---|---|---|

| 0 | No Data | No Data | No Data | No Data | No Data |

dfs[2]| Stock Name | Sector | LTP | Change | %Chg | |

|---|---|---|---|---|---|

| 0 | Infosys | Computers - Software | 1344.00 | -25.10 | -1.83 |

| 1 | Tech Mahindra | Computers - Software | 1515.00 | -19.40 | -1.26 |

| 2 | Maruti Suzuki | Auto - Cars & Jeeps | 15045.00 | -192.00 | -1.26 |

| 3 | Jio Financial | Finance - Others | 261.30 | -2.65 | -1.00 |

| 4 | Hindalco | Iron & Steel | 900.10 | -8.90 | -0.98 |

| 5 | Bajaj Auto | Auto - 2 & 3 Wheelers | 9670.00 | -90.00 | -0.92 |

| 6 | TMPV | Auto - LCVs & HCVs | 377.05 | -3.20 | -0.84 |

| 7 | Wipro | Computers - Software | 212.40 | -1.69 | -0.79 |

| 8 | SBI | Banks - Public Sector | 1189.20 | -9.40 | -0.78 |

| 9 | M&M | Auto - Cars & Jeeps | 3512.00 | -21.40 | -0.61 |

| 10 | UltraTechCement | Cement - Major | 12892.00 | -71.00 | -0.55 |

| 11 | Adani Ports | Infrastructure - General | 1510.80 | -8.20 | -0.54 |

| 12 | Bharat Elec | Aerospace & Defence | 433.35 | -2.20 | -0.51 |

| 13 | ICICI Bank | Banks - Private Sector | 1407.50 | -7.10 | -0.50 |

| 14 | Larsen | Infrastructure - General | 4160.70 | -13.20 | -0.32 |

| 15 | Eicher Motors | Auto - LCVs & HCVs | 8044.50 | -20.50 | -0.25 |

| 16 | Bajaj Finance | Finance - NBFC | 1022.55 | -2.20 | -0.21 |

| 17 | Interglobe Avi | Transport & Logistics | 4921.00 | -8.20 | -0.17 |

| 18 | Dr Reddys Labs | Pharmaceuticals | 1266.00 | -2.10 | -0.17 |

| 19 | TCS | Computers - Software | 2688.20 | -4.00 | -0.15 |

| 20 | Tata Steel | Iron & Steel | 202.89 | -0.29 | -0.14 |

| 21 | Apollo Hospital | Hospitals & Medical Services | 7532.00 | -10.50 | -0.14 |

| 22 | Bharti Airtel | Telecommunications - Service | 2003.10 | -1.60 | -0.08 |

| 23 | HCL Tech | Computers - Software | 1454.20 | -1.00 | -0.07 |

dfs[3]| Stock Name | Sector | LTP | Change | %Chg | |

|---|---|---|---|---|---|

| 0 | Coal India | Mining & Minerals | 419.35 | 10.40 | 2.54 |

| 1 | HDFC Bank | Banks - Private Sector | 921.90 | 18.00 | 1.99 |

| 2 | ONGC | Oil Drilling And Exploration | 270.95 | 3.55 | 1.33 |

| 3 | NTPC | Power - Generation & Distribution | 367.75 | 4.75 | 1.31 |

| 4 | Eternal | Online Services | 288.90 | 3.70 | 1.30 |

| 5 | Axis Bank | Banks - Private Sector | 1349.00 | 16.70 | 1.25 |

| 6 | Bajaj Finserv | Finance - Investments | 2042.30 | 19.10 | 0.94 |

| 7 | Cipla | Pharmaceuticals | 1343.40 | 11.90 | 0.89 |

| 8 | Max Healthcare | Hospital & Healthcare Services | 1062.40 | 8.80 | 0.84 |

| 9 | ITC | Diversified | 316.35 | 2.60 | 0.83 |

| 10 | HDFC Life | Life & Health Insurance | 701.35 | 4.55 | 0.65 |

| 11 | Shriram Finance | Finance - Leasing & Hire Purchase | 1071.50 | 5.70 | 0.53 |

| 12 | Nestle | Food Processing | 1288.20 | 5.60 | 0.44 |

| 13 | TATA Cons. Prod | Plantations - Tea & Coffee | 1135.60 | 3.40 | 0.30 |

| 14 | Asian Paints | Paints & Varnishes | 2373.50 | 7.10 | 0.30 |

| 15 | SBI Life Insura | Life & Health Insurance | 2038.30 | 4.10 | 0.20 |

| 16 | JSW Steel | Steel - Large | 1234.40 | 2.40 | 0.19 |

| 17 | Trent | Retail | 4259.70 | 7.70 | 0.18 |

| 18 | Kotak Mahindra | Banks - Private Sector | 421.35 | 0.70 | 0.17 |

| 19 | Grasim | Diversified | 2892.90 | 4.90 | 0.17 |

| 20 | Sun Pharma | Pharmaceuticals | 1700.00 | 2.50 | 0.15 |

| 21 | Titan Company | Miscellaneous | 4185.00 | 5.80 | 0.14 |

| 22 | Reliance | Refineries | 1421.00 | 1.40 | 0.10 |

| 23 | HUL | Personal Care | 2306.50 | 1.30 | 0.06 |

| 24 | Adani Enterpris | Trading | 2136.70 | 0.10 | 0.00 |

dfs[4]| Stock Name | Sector | LTP | Change | %Chg | |

|---|---|---|---|---|---|

| 0 | Power Grid Corp | Power - Generation & Distribution | 297.25 | 10.05 | 3.5 |

dfs[5]| Stock Name | Sector | LTP | Change | %Chg | |

|---|---|---|---|---|---|

| 0 | No Data | No Data | No Data | No Data | No Data |

import time

time.sleep(10)18.0.2 DataFrame

wallet| Unnamed: 0 | date | category | description | debit | |

|---|---|---|---|---|---|

| 0 | 0 | 2021-03-07 14:53:28.377359 | Music | Amazon | 421.207327 |

| 1 | 1 | 2020-10-08 09:53:28.377359 | Food | Swiggy | 328.440080 |

| 2 | 2 | 2021-02-23 09:53:28.377359 | Books | Amazon | 244.679437 |

| 3 | 3 | 2020-11-01 14:53:28.377359 | Utility | Phone | 222.756318 |

| 4 | 4 | 2021-06-05 13:53:28.377359 | Books | Flipcart | 494.128492 |

| ... | ... | ... | ... | ... | ... |

| 95 | 95 | 2021-07-19 13:53:28.377359 | Utility | Phone | 388.671213 |

| 96 | 96 | 2021-01-12 19:53:28.377359 | Books | Flipcart | 467.554562 |

| 97 | 97 | 2021-03-25 11:53:28.377359 | Utility | Phone | 320.789434 |

| 98 | 98 | 2021-05-13 15:53:28.377359 | Travel | Taxi | 442.096469 |

| 99 | 99 | 2020-10-11 16:53:28.377359 | Food | Hotel | 100.455501 |

100 rows × 5 columns

type(wallet)pandas.core.frame.DataFramewallet['date'] # like dictioanry ..column names can be used as keys0 2021-03-07 14:53:28.377359

1 2020-10-08 09:53:28.377359

2 2021-02-23 09:53:28.377359

3 2020-11-01 14:53:28.377359

4 2021-06-05 13:53:28.377359

...

95 2021-07-19 13:53:28.377359

96 2021-01-12 19:53:28.377359

97 2021-03-25 11:53:28.377359

98 2021-05-13 15:53:28.377359

99 2020-10-11 16:53:28.377359

Name: date, Length: 100, dtype: objectwallet['debit']0 421.207327

1 328.440080

2 244.679437

3 222.756318

4 494.128492

...

95 388.671213

96 467.554562

97 320.789434

98 442.096469

99 100.455501

Name: debit, Length: 100, dtype: float64wallet['debit'].sum()np.float64(31059.590543177284)wallet['debit'].std()np.float64(121.17821796983652)wallet['debit'].mean()np.float64(310.59590543177285)sum([f for f in wallet['debit']])31059.59054317728wallet.debit # access like attribute0 421.207327

1 328.440080

2 244.679437

3 222.756318

4 494.128492

...

95 388.671213

96 467.554562

97 320.789434

98 442.096469

99 100.455501

Name: debit, Length: 100, dtype: float64wallet['Unnamed: 0']0 0

1 1

2 2

3 3

4 4

..

95 95

96 96

97 97

98 98

99 99

Name: Unnamed: 0, Length: 100, dtype: int64type(wallet.debit)pandas.core.series.Seriess = pd.Series([1, 2, 3, 4, 5, 5])s0 1

1 2

2 3

3 4

4 5

5 5

dtype: int64stocks = pd.Series([421, 328, 123, 234], index=["APPLE","AT&T","IBM","NIKE"])stocksAPPLE 421

AT&T 328

IBM 123

NIKE 234

dtype: int64stocks['APPLE'] # use index label like a key ..it will give values at that indexnp.int64(421)stocks['IBM']np.int64(123)pd.Series([421, 328, 123, 234])0 421

1 328

2 123

3 234

dtype: int64stocks['APPLE']np.int64(421)stocks[0]/tmp/ipykernel_68398/1593074069.py:1: FutureWarning: Series.__getitem__ treating keys as positions is deprecated. In a future version, integer keys will always be treated as labels (consistent with DataFrame behavior). To access a value by position, use `ser.iloc[pos]`

stocks[0]np.int64(421)s[0]np.int64(1)s0 1

1 2

2 3

3 4

4 5

5 5

dtype: int64Dataframe is collection of Series with same index

labels = ["APPLE","AT&T","IBM","NIKE"]

value = pd.Series([234.5, 221.6, 125.7, 100.5], index=labels)

low = pd.Series([233.0, 220.0, 123.0, 104.0], index=labels)

high = pd.Series([240.32, 222.5, 127.3, 105.0], index=labels)

volume = pd.Series([100, 200, 50, 1000], index=labels)valueAPPLE 234.5

AT&T 221.6

IBM 125.7

NIKE 100.5

dtype: float64lowAPPLE 233.0

AT&T 220.0

IBM 123.0

NIKE 104.0

dtype: float64pd.DataFrame({"value":value, "low": low, "high": high,"volume": volume})| value | low | high | volume | |

|---|---|---|---|---|

| APPLE | 234.5 | 233.0 | 240.32 | 100 |

| AT&T | 221.6 | 220.0 | 222.50 | 200 |

| IBM | 125.7 | 123.0 | 127.30 | 50 |

| NIKE | 100.5 | 104.0 | 105.00 | 1000 |

stocks = pd.DataFrame({"value":value, "low": low, "high": high,"volume": volume})stocks| value | low | high | volume | |

|---|---|---|---|---|

| APPLE | 234.5 | 233.0 | 240.32 | 100 |

| AT&T | 221.6 | 220.0 | 222.50 | 200 |

| IBM | 125.7 | 123.0 | 127.30 | 50 |

| NIKE | 100.5 | 104.0 | 105.00 | 1000 |

pd.DataFrame({ "value" : [234.5, 221.6, 125.7, 100.5],

"high" : [240.32, 222.5, 127.3, 105.0],

"low" : [233.0, 220.0, 123.0, 104.0],

"volume" : [100, 200, 50, 1000]},

index=labels)| value | high | low | volume | |

|---|---|---|---|---|

| APPLE | 234.5 | 240.32 | 233.0 | 100 |

| AT&T | 221.6 | 222.50 | 220.0 | 200 |

| IBM | 125.7 | 127.30 | 123.0 | 50 |

| NIKE | 100.5 | 105.00 | 104.0 | 1000 |

stocks.valueAPPLE 234.5

AT&T 221.6

IBM 125.7

NIKE 100.5

Name: value, dtype: float64stocks.volumeAPPLE 100

AT&T 200

IBM 50

NIKE 1000

Name: volume, dtype: int6418.0.2.1 How to access rows?

stocks.loc['APPLE']value 234.50

low 233.00

high 240.32

volume 100.00

Name: APPLE, dtype: float64stocks| value | low | high | volume | |

|---|---|---|---|---|

| APPLE | 234.5 | 233.0 | 240.32 | 100 |

| AT&T | 221.6 | 220.0 | 222.50 | 200 |

| IBM | 125.7 | 123.0 | 127.30 | 50 |

| NIKE | 100.5 | 104.0 | 105.00 | 1000 |

stocks.loc['NIKE']value 100.5

low 104.0

high 105.0

volume 1000.0

Name: NIKE, dtype: float64[i for i in stocks.loc['NIKE']][100.5, 104.0, 105.0, 1000.0]18.0.2.2 Accessing multiple items

stocks['value'] # you will get a seriesAPPLE 234.5

AT&T 221.6

IBM 125.7

NIKE 100.5

Name: value, dtype: float64stocks[['value','volume']] # subset of columns| value | volume | |

|---|---|---|

| APPLE | 234.5 | 100 |

| AT&T | 221.6 | 200 |

| IBM | 125.7 | 50 |

| NIKE | 100.5 | 1000 |

stocks| value | low | high | volume | |

|---|---|---|---|---|

| APPLE | 234.5 | 233.0 | 240.32 | 100 |

| AT&T | 221.6 | 220.0 | 222.50 | 200 |

| IBM | 125.7 | 123.0 | 127.30 | 50 |

| NIKE | 100.5 | 104.0 | 105.00 | 1000 |

d = stocks[['value', 'volume']]d| value | volume | |

|---|---|---|

| APPLE | 234.5 | 100 |

| AT&T | 221.6 | 200 |

| IBM | 125.7 | 50 |

| NIKE | 100.5 | 1000 |

stocks| value | low | high | volume | |

|---|---|---|---|---|

| APPLE | 234.5 | 233.0 | 240.32 | 100 |

| AT&T | 221.6 | 220.0 | 222.50 | 200 |

| IBM | 125.7 | 123.0 | 127.30 | 50 |

| NIKE | 100.5 | 104.0 | 105.00 | 1000 |

d| value | volume | |

|---|---|---|

| APPLE | 234.5 | 100 |

| AT&T | 221.6 | 200 |

| IBM | 125.7 | 50 |

| NIKE | 100.5 | 1000 |

v = stocks['value']vAPPLE 234.5

AT&T 221.6

IBM 125.7

NIKE 100.5

Name: value, dtype: float64v[['APPLE','IBM']] # in series also you can give multiple lables to accessAPPLE 234.5

IBM 125.7

Name: value, dtype: float64v['APPLE']np.float64(234.5)stocks.iloc[0]value 234.50

low 233.00

high 240.32

volume 100.00

Name: APPLE, dtype: float64stocks| value | low | high | volume | |

|---|---|---|---|---|

| APPLE | 234.5 | 233.0 | 240.32 | 100 |

| AT&T | 221.6 | 220.0 | 222.50 | 200 |

| IBM | 125.7 | 123.0 | 127.30 | 50 |

| NIKE | 100.5 | 104.0 | 105.00 | 1000 |

stocks.iloc[0]value 234.50

low 233.00

high 240.32

volume 100.00

Name: APPLE, dtype: float64stocks.iloc[[0,2]]| value | low | high | volume | |

|---|---|---|---|---|

| APPLE | 234.5 | 233.0 | 240.32 | 100 |

| IBM | 125.7 | 123.0 | 127.30 | 50 |

stocks.iloc[[0,2], [0, 1]] # subset of rows and columns| value | low | |

|---|---|---|

| APPLE | 234.5 | 233.0 |

| IBM | 125.7 | 123.0 |

18.0.3 Working with dataframe

wallet| Unnamed: 0 | date | category | description | debit | |

|---|---|---|---|---|---|

| 0 | 0 | 2021-03-07 14:53:28.377359 | Music | Amazon | 421.207327 |

| 1 | 1 | 2020-10-08 09:53:28.377359 | Food | Swiggy | 328.440080 |

| 2 | 2 | 2021-02-23 09:53:28.377359 | Books | Amazon | 244.679437 |

| 3 | 3 | 2020-11-01 14:53:28.377359 | Utility | Phone | 222.756318 |

| 4 | 4 | 2021-06-05 13:53:28.377359 | Books | Flipcart | 494.128492 |

| ... | ... | ... | ... | ... | ... |

| 95 | 95 | 2021-07-19 13:53:28.377359 | Utility | Phone | 388.671213 |

| 96 | 96 | 2021-01-12 19:53:28.377359 | Books | Flipcart | 467.554562 |

| 97 | 97 | 2021-03-25 11:53:28.377359 | Utility | Phone | 320.789434 |

| 98 | 98 | 2021-05-13 15:53:28.377359 | Travel | Taxi | 442.096469 |

| 99 | 99 | 2020-10-11 16:53:28.377359 | Food | Hotel | 100.455501 |

100 rows × 5 columns

wallet.head() # will first five lines of all the column| Unnamed: 0 | date | category | description | debit | |

|---|---|---|---|---|---|

| 0 | 0 | 2021-03-07 14:53:28.377359 | Music | Amazon | 421.207327 |

| 1 | 1 | 2020-10-08 09:53:28.377359 | Food | Swiggy | 328.440080 |

| 2 | 2 | 2021-02-23 09:53:28.377359 | Books | Amazon | 244.679437 |

| 3 | 3 | 2020-11-01 14:53:28.377359 | Utility | Phone | 222.756318 |

| 4 | 4 | 2021-06-05 13:53:28.377359 | Books | Flipcart | 494.128492 |

wallet.tail() # this will show last five lines for all the columns| Unnamed: 0 | date | category | description | debit | |

|---|---|---|---|---|---|

| 95 | 95 | 2021-07-19 13:53:28.377359 | Utility | Phone | 388.671213 |

| 96 | 96 | 2021-01-12 19:53:28.377359 | Books | Flipcart | 467.554562 |

| 97 | 97 | 2021-03-25 11:53:28.377359 | Utility | Phone | 320.789434 |

| 98 | 98 | 2021-05-13 15:53:28.377359 | Travel | Taxi | 442.096469 |

| 99 | 99 | 2020-10-11 16:53:28.377359 | Food | Hotel | 100.455501 |

head = wallet.head()head| Unnamed: 0 | date | category | description | debit | |

|---|---|---|---|---|---|

| 0 | 0 | 2021-03-07 14:53:28.377359 | Music | Amazon | 421.207327 |

| 1 | 1 | 2020-10-08 09:53:28.377359 | Food | Swiggy | 328.440080 |

| 2 | 2 | 2021-02-23 09:53:28.377359 | Books | Amazon | 244.679437 |

| 3 | 3 | 2020-11-01 14:53:28.377359 | Utility | Phone | 222.756318 |

| 4 | 4 | 2021-06-05 13:53:28.377359 | Books | Flipcart | 494.128492 |

tail = wallet.tail()tail| Unnamed: 0 | date | category | description | debit | |

|---|---|---|---|---|---|

| 95 | 95 | 2021-07-19 13:53:28.377359 | Utility | Phone | 388.671213 |

| 96 | 96 | 2021-01-12 19:53:28.377359 | Books | Flipcart | 467.554562 |

| 97 | 97 | 2021-03-25 11:53:28.377359 | Utility | Phone | 320.789434 |

| 98 | 98 | 2021-05-13 15:53:28.377359 | Travel | Taxi | 442.096469 |

| 99 | 99 | 2020-10-11 16:53:28.377359 | Food | Hotel | 100.455501 |

wallet| Unnamed: 0 | date | category | description | debit | |

|---|---|---|---|---|---|

| 0 | 0 | 2021-03-07 14:53:28.377359 | Music | Amazon | 421.207327 |

| 1 | 1 | 2020-10-08 09:53:28.377359 | Food | Swiggy | 328.440080 |

| 2 | 2 | 2021-02-23 09:53:28.377359 | Books | Amazon | 244.679437 |

| 3 | 3 | 2020-11-01 14:53:28.377359 | Utility | Phone | 222.756318 |

| 4 | 4 | 2021-06-05 13:53:28.377359 | Books | Flipcart | 494.128492 |

| ... | ... | ... | ... | ... | ... |

| 95 | 95 | 2021-07-19 13:53:28.377359 | Utility | Phone | 388.671213 |

| 96 | 96 | 2021-01-12 19:53:28.377359 | Books | Flipcart | 467.554562 |

| 97 | 97 | 2021-03-25 11:53:28.377359 | Utility | Phone | 320.789434 |

| 98 | 98 | 2021-05-13 15:53:28.377359 | Travel | Taxi | 442.096469 |

| 99 | 99 | 2020-10-11 16:53:28.377359 | Food | Hotel | 100.455501 |

100 rows × 5 columns

wallet.rename(columns={"Unnamed: 0":"srno"})| srno | date | category | description | debit | |

|---|---|---|---|---|---|

| 0 | 0 | 2021-03-07 14:53:28.377359 | Music | Amazon | 421.207327 |

| 1 | 1 | 2020-10-08 09:53:28.377359 | Food | Swiggy | 328.440080 |

| 2 | 2 | 2021-02-23 09:53:28.377359 | Books | Amazon | 244.679437 |

| 3 | 3 | 2020-11-01 14:53:28.377359 | Utility | Phone | 222.756318 |

| 4 | 4 | 2021-06-05 13:53:28.377359 | Books | Flipcart | 494.128492 |

| ... | ... | ... | ... | ... | ... |

| 95 | 95 | 2021-07-19 13:53:28.377359 | Utility | Phone | 388.671213 |

| 96 | 96 | 2021-01-12 19:53:28.377359 | Books | Flipcart | 467.554562 |

| 97 | 97 | 2021-03-25 11:53:28.377359 | Utility | Phone | 320.789434 |

| 98 | 98 | 2021-05-13 15:53:28.377359 | Travel | Taxi | 442.096469 |

| 99 | 99 | 2020-10-11 16:53:28.377359 | Food | Hotel | 100.455501 |

100 rows × 5 columns

wallet| Unnamed: 0 | date | category | description | debit | |

|---|---|---|---|---|---|

| 0 | 0 | 2021-03-07 14:53:28.377359 | Music | Amazon | 421.207327 |

| 1 | 1 | 2020-10-08 09:53:28.377359 | Food | Swiggy | 328.440080 |

| 2 | 2 | 2021-02-23 09:53:28.377359 | Books | Amazon | 244.679437 |

| 3 | 3 | 2020-11-01 14:53:28.377359 | Utility | Phone | 222.756318 |

| 4 | 4 | 2021-06-05 13:53:28.377359 | Books | Flipcart | 494.128492 |

| ... | ... | ... | ... | ... | ... |

| 95 | 95 | 2021-07-19 13:53:28.377359 | Utility | Phone | 388.671213 |

| 96 | 96 | 2021-01-12 19:53:28.377359 | Books | Flipcart | 467.554562 |

| 97 | 97 | 2021-03-25 11:53:28.377359 | Utility | Phone | 320.789434 |

| 98 | 98 | 2021-05-13 15:53:28.377359 | Travel | Taxi | 442.096469 |

| 99 | 99 | 2020-10-11 16:53:28.377359 | Food | Hotel | 100.455501 |

100 rows × 5 columns

w = wallet.rename(columns={"Unnamed: 0":"srno"})w| srno | date | category | description | debit | |

|---|---|---|---|---|---|

| 0 | 0 | 2021-03-07 14:53:28.377359 | Music | Amazon | 421.207327 |

| 1 | 1 | 2020-10-08 09:53:28.377359 | Food | Swiggy | 328.440080 |

| 2 | 2 | 2021-02-23 09:53:28.377359 | Books | Amazon | 244.679437 |

| 3 | 3 | 2020-11-01 14:53:28.377359 | Utility | Phone | 222.756318 |

| 4 | 4 | 2021-06-05 13:53:28.377359 | Books | Flipcart | 494.128492 |

| ... | ... | ... | ... | ... | ... |

| 95 | 95 | 2021-07-19 13:53:28.377359 | Utility | Phone | 388.671213 |

| 96 | 96 | 2021-01-12 19:53:28.377359 | Books | Flipcart | 467.554562 |

| 97 | 97 | 2021-03-25 11:53:28.377359 | Utility | Phone | 320.789434 |

| 98 | 98 | 2021-05-13 15:53:28.377359 | Travel | Taxi | 442.096469 |

| 99 | 99 | 2020-10-11 16:53:28.377359 | Food | Hotel | 100.455501 |

100 rows × 5 columns

del w['srno']w| date | category | description | debit | |

|---|---|---|---|---|

| 0 | 2021-03-07 14:53:28.377359 | Music | Amazon | 421.207327 |

| 1 | 2020-10-08 09:53:28.377359 | Food | Swiggy | 328.440080 |

| 2 | 2021-02-23 09:53:28.377359 | Books | Amazon | 244.679437 |

| 3 | 2020-11-01 14:53:28.377359 | Utility | Phone | 222.756318 |

| 4 | 2021-06-05 13:53:28.377359 | Books | Flipcart | 494.128492 |

| ... | ... | ... | ... | ... |

| 95 | 2021-07-19 13:53:28.377359 | Utility | Phone | 388.671213 |

| 96 | 2021-01-12 19:53:28.377359 | Books | Flipcart | 467.554562 |

| 97 | 2021-03-25 11:53:28.377359 | Utility | Phone | 320.789434 |

| 98 | 2021-05-13 15:53:28.377359 | Travel | Taxi | 442.096469 |

| 99 | 2020-10-11 16:53:28.377359 | Food | Hotel | 100.455501 |

100 rows × 4 columns

wallet['ones'] = [1]*100[1]*100 # it will create a list of length 100 wallet| Unnamed: 0 | date | category | description | debit | ones | |

|---|---|---|---|---|---|---|

| 0 | 0 | 2021-03-07 14:53:28.377359 | Music | Amazon | 421.207327 | 1 |

| 1 | 1 | 2020-10-08 09:53:28.377359 | Food | Swiggy | 328.440080 | 1 |

| 2 | 2 | 2021-02-23 09:53:28.377359 | Books | Amazon | 244.679437 | 1 |

| 3 | 3 | 2020-11-01 14:53:28.377359 | Utility | Phone | 222.756318 | 1 |

| 4 | 4 | 2021-06-05 13:53:28.377359 | Books | Flipcart | 494.128492 | 1 |

| ... | ... | ... | ... | ... | ... | ... |

| 95 | 95 | 2021-07-19 13:53:28.377359 | Utility | Phone | 388.671213 | 1 |

| 96 | 96 | 2021-01-12 19:53:28.377359 | Books | Flipcart | 467.554562 | 1 |

| 97 | 97 | 2021-03-25 11:53:28.377359 | Utility | Phone | 320.789434 | 1 |

| 98 | 98 | 2021-05-13 15:53:28.377359 | Travel | Taxi | 442.096469 | 1 |

| 99 | 99 | 2020-10-11 16:53:28.377359 | Food | Hotel | 100.455501 | 1 |

100 rows × 6 columns

wallet.sum()[['debit','ones']]debit 31059.590543

ones 100

dtype: objectstocks| value | low | high | volume | |

|---|---|---|---|---|

| APPLE | 234.5 | 233.0 | 240.32 | 100 |

| AT&T | 221.6 | 220.0 | 222.50 | 200 |

| IBM | 125.7 | 123.0 | 127.30 | 50 |

| NIKE | 100.5 | 104.0 | 105.00 | 1000 |

stocks.rename(columns={'value': "VALUE"})| VALUE | low | high | volume | |

|---|---|---|---|---|

| APPLE | 234.5 | 233.0 | 240.32 | 100 |

| AT&T | 221.6 | 220.0 | 222.50 | 200 |

| IBM | 125.7 | 123.0 | 127.30 | 50 |

| NIKE | 100.5 | 104.0 | 105.00 | 1000 |

stocks| value | low | high | volume | |

|---|---|---|---|---|

| APPLE | 234.5 | 233.0 | 240.32 | 100 |

| AT&T | 221.6 | 220.0 | 222.50 | 200 |

| IBM | 125.7 | 123.0 | 127.30 | 50 |

| NIKE | 100.5 | 104.0 | 105.00 | 1000 |

stocks.rename(columns={'value': "VALUE"}, inplace=True)stocks| VALUE | low | high | volume | |

|---|---|---|---|---|

| APPLE | 234.5 | 233.0 | 240.32 | 100 |

| AT&T | 221.6 | 220.0 | 222.50 | 200 |

| IBM | 125.7 | 123.0 | 127.30 | 50 |

| NIKE | 100.5 | 104.0 | 105.00 | 1000 |

18.0.4 Combining two dataframes

stocks.rename(columns={"VALUE":"value"}, inplace=True)stocks| value | low | high | volume | |

|---|---|---|---|---|

| APPLE | 234.5 | 233.0 | 240.32 | 100 |

| AT&T | 221.6 | 220.0 | 222.50 | 200 |

| IBM | 125.7 | 123.0 | 127.30 | 50 |

| NIKE | 100.5 | 104.0 | 105.00 | 1000 |

stock1 = pd.DataFrame({

"value" : [125, 500.0, 300.4, 423.9],

"low" : [125.0, 490.0, 299.5, 421.1],

"high" : [130.0, 500.0, 305.0, 425.5],

"volume" : [123, 50, 100, 80]

},

index = ["BELL","XEROX","FORD","TESLA"]

)stock1| value | low | high | volume | |

|---|---|---|---|---|

| BELL | 125.0 | 125.0 | 130.0 | 123 |

| XEROX | 500.0 | 490.0 | 500.0 | 50 |

| FORD | 300.4 | 299.5 | 305.0 | 100 |

| TESLA | 423.9 | 421.1 | 425.5 | 80 |

pd.concat([stocks, stock1])| value | low | high | volume | |

|---|---|---|---|---|

| APPLE | 234.5 | 233.0 | 240.32 | 100 |

| AT&T | 221.6 | 220.0 | 222.50 | 200 |

| IBM | 125.7 | 123.0 | 127.30 | 50 |

| NIKE | 100.5 | 104.0 | 105.00 | 1000 |

| BELL | 125.0 | 125.0 | 130.00 | 123 |

| XEROX | 500.0 | 490.0 | 500.00 | 50 |

| FORD | 300.4 | 299.5 | 305.00 | 100 |

| TESLA | 423.9 | 421.1 | 425.50 | 80 |

problem - Write a function combine_csvs to combine multiple csv files in to single one. Assume that all csv files have same columns but different data.

file = ["x1.csv", "x2.csv", "x3.csv"]

outputfile = "combined.csv"

combine_csvs(files, outputfile)stock1.to_csv("stock1.csv")!cat stock1.csv,value,low,high,volume

BELL,125.0,125.0,130.0,123

XEROX,500.0,490.0,500.0,50

FORD,300.4,299.5,305.0,100

TESLA,423.9,421.1,425.5,80%%file x1.csv

ticker,value,low,high,volume

BELL,125.0,125.0,130.0,123

XEROX,500.0,490.0,500.0,50

FORD,300.4,299.5,305.0,100

TESLA,423.9,421.1,425.5,80Writing x1.csv%%file x2.csv

ticker,value,low,high,volume

APPLE,234.5,233.0,240.32,100

AT&T,221.6,220.0,222.50,200

IBM,125.7,123.0,127.30,50

NIKE,100.5,104.0,105.00,1000Writing x2.csv%%file x3.csv

ticker,value,low,high,volume

T1,234.5,233.0,240.32,100

T2,221.6,220.0,222.50,200

T3,125.7,123.0,127.30,50

T4,100.5,104.0,105.00,1000Writing x3.csvimport pandas as pd

def combined_csvs(csvfiles, outputfile):

dfs = [pd.read_csv(f) for f in csvfiles]

df = pd.concat(dfs)

df.to_csv(outputfile)combined_csvs(["x1.csv", "x2.csv", "x3.csv"], "combined.csv")!cat combined.csv,ticker,value,low,high,volume

0,BELL,125.0,125.0,130.0,123

1,XEROX,500.0,490.0,500.0,50

2,FORD,300.4,299.5,305.0,100

3,TESLA,423.9,421.1,425.5,80

0,APPLE,234.5,233.0,240.32,100

1,AT&T,221.6,220.0,222.5,200

2,IBM,125.7,123.0,127.3,50

3,NIKE,100.5,104.0,105.0,1000

0,T1,234.5,233.0,240.32,100

1,T2,221.6,220.0,222.5,200

2,T3,125.7,123.0,127.3,50

3,T4,100.5,104.0,105.0,1000def combined_csvs(*args): # here having a * before agrument means is variable number of arguments

passdef mysum(*args):

s = 0

for i in args:

s += i

return smysum(2, 3)5mysum(1, 2, 3)6mysum(1, 2, 3, 4, 5, 6, 7)28def combined_csvs(outputfile, *csvfiles): # outputfile is fixed argument, csvfiles is varaible number of arguments

dfs = [pd.read_csv(f) for f in csvfiles]

df = pd.concat(dfs)

df.to_csv(outputfile)combined_csvs("X.csv", "x1.csv", "x2.csv")!cat X.csv,ticker,value,low,high,volume

0,BELL,125.0,125.0,130.0,123

1,XEROX,500.0,490.0,500.0,50

2,FORD,300.4,299.5,305.0,100

3,TESLA,423.9,421.1,425.5,80

0,APPLE,234.5,233.0,240.32,100

1,AT&T,221.6,220.0,222.5,200

2,IBM,125.7,123.0,127.3,50

3,NIKE,100.5,104.0,105.0,1000combined_csvs("X.csv", "x1.csv", "x2.csv", "x3.csv")!cat X.csv,ticker,value,low,high,volume

0,BELL,125.0,125.0,130.0,123

1,XEROX,500.0,490.0,500.0,50

2,FORD,300.4,299.5,305.0,100

3,TESLA,423.9,421.1,425.5,80

0,APPLE,234.5,233.0,240.32,100

1,AT&T,221.6,220.0,222.5,200

2,IBM,125.7,123.0,127.3,50

3,NIKE,100.5,104.0,105.0,1000

0,T1,234.5,233.0,240.32,100

1,T2,221.6,220.0,222.5,200

2,T3,125.7,123.0,127.3,50

3,T4,100.5,104.0,105.0,1000merging

df1 = pd.DataFrame(

{

"a":[1,2, 3, 4, 5],

"b":[34, 56, 76, 87, 9],

"labels": ["x","y","z","m","n"]

}

)df1| a | b | labels | |

|---|---|---|---|

| 0 | 1 | 34 | x |

| 1 | 2 | 56 | y |

| 2 | 3 | 76 | z |

| 3 | 4 | 87 | m |

| 4 | 5 | 9 | n |

df2 = pd.DataFrame(

{

"c":[1,2, 3, 4],

"d":[34, 56, 76, 87],

"labels":["x","y","z","m"]}

)df2| c | d | labels | |

|---|---|---|---|

| 0 | 1 | 34 | x |

| 1 | 2 | 56 | y |

| 2 | 3 | 76 | z |

| 3 | 4 | 87 | m |

pd.merge(df1, df2, on="labels")| a | b | labels | c | d | |

|---|---|---|---|---|---|

| 0 | 1 | 34 | x | 1 | 34 |

| 1 | 2 | 56 | y | 2 | 56 |

| 2 | 3 | 76 | z | 3 | 76 |

| 3 | 4 | 87 | m | 4 | 87 |

dfj1 = pd.DataFrame(

{

"a":[1,2, 3, 4, 5],

"b":[34, 56, 76, 87, 9]

},

index=["x","y","z","m","n"]

)dfj1| a | b | |

|---|---|---|

| x | 1 | 34 |

| y | 2 | 56 |

| z | 3 | 76 |

| m | 4 | 87 |

| n | 5 | 9 |

dfj2 = pd.DataFrame(

{

"c":[1,2, 3, 4],

"d":[34, 56, 76, 87],

},

index=["x","y","z","m"]

)dfj2| c | d | |

|---|---|---|

| x | 1 | 34 |

| y | 2 | 56 |

| z | 3 | 76 |

| m | 4 | 87 |

dfj1.join(dfj2)| a | b | c | d | |

|---|---|---|---|---|

| x | 1 | 34 | 1.0 | 34.0 |

| y | 2 | 56 | 2.0 | 56.0 |

| z | 3 | 76 | 3.0 | 76.0 |

| m | 4 | 87 | 4.0 | 87.0 |

| n | 5 | 9 | NaN | NaN |

18.0.4.1 index

df1| a | b | labels | |

|---|---|---|---|

| 0 | 1 | 34 | x |

| 1 | 2 | 56 | y |

| 2 | 3 | 76 | z |

| 3 | 4 | 87 | m |

| 4 | 5 | 9 | n |

d1 = df1.set_index("labels")d1| a | b | |

|---|---|---|

| labels | ||

| x | 1 | 34 |

| y | 2 | 56 |

| z | 3 | 76 |

| m | 4 | 87 |

| n | 5 | 9 |

d2 = df2.set_index("labels")d2| c | d | |

|---|---|---|

| labels | ||

| x | 1 | 34 |

| y | 2 | 56 |

| z | 3 | 76 |

| m | 4 | 87 |

d1.join(d2)| a | b | c | d | |

|---|---|---|---|---|

| labels | ||||

| x | 1 | 34 | 1.0 | 34.0 |

| y | 2 | 56 | 2.0 | 56.0 |

| z | 3 | 76 | 3.0 | 76.0 |

| m | 4 | 87 | 4.0 | 87.0 |

| n | 5 | 9 | NaN | NaN |

df1| a | b | labels | |

|---|---|---|---|

| 0 | 1 | 34 | x |

| 1 | 2 | 56 | y |

| 2 | 3 | 76 | z |

| 3 | 4 | 87 | m |

| 4 | 5 | 9 | n |

d1| a | b | |

|---|---|---|

| labels | ||

| x | 1 | 34 |

| y | 2 | 56 |

| z | 3 | 76 |

| m | 4 | 87 |

| n | 5 | 9 |

d1.reset_index() # this will drop the existing index and make it column of the dataframe and set default index to dataframe| labels | a | b | |

|---|---|---|---|

| 0 | x | 1 | 34 |

| 1 | y | 2 | 56 |

| 2 | z | 3 | 76 |

| 3 | m | 4 | 87 |

| 4 | n | 5 | 9 |

18.0.5 groupby

wallet| Unnamed: 0 | date | category | description | debit | ones | |

|---|---|---|---|---|---|---|

| 0 | 0 | 2021-03-07 14:53:28.377359 | Music | Amazon | 421.207327 | 1 |

| 1 | 1 | 2020-10-08 09:53:28.377359 | Food | Swiggy | 328.440080 | 1 |

| 2 | 2 | 2021-02-23 09:53:28.377359 | Books | Amazon | 244.679437 | 1 |

| 3 | 3 | 2020-11-01 14:53:28.377359 | Utility | Phone | 222.756318 | 1 |

| 4 | 4 | 2021-06-05 13:53:28.377359 | Books | Flipcart | 494.128492 | 1 |

| ... | ... | ... | ... | ... | ... | ... |

| 95 | 95 | 2021-07-19 13:53:28.377359 | Utility | Phone | 388.671213 | 1 |

| 96 | 96 | 2021-01-12 19:53:28.377359 | Books | Flipcart | 467.554562 | 1 |

| 97 | 97 | 2021-03-25 11:53:28.377359 | Utility | Phone | 320.789434 | 1 |

| 98 | 98 | 2021-05-13 15:53:28.377359 | Travel | Taxi | 442.096469 | 1 |

| 99 | 99 | 2020-10-11 16:53:28.377359 | Food | Hotel | 100.455501 | 1 |

100 rows × 6 columns

w| date | category | description | debit | |

|---|---|---|---|---|

| 0 | 2021-03-07 14:53:28.377359 | Music | Amazon | 421.207327 |

| 1 | 2020-10-08 09:53:28.377359 | Food | Swiggy | 328.440080 |

| 2 | 2021-02-23 09:53:28.377359 | Books | Amazon | 244.679437 |

| 3 | 2020-11-01 14:53:28.377359 | Utility | Phone | 222.756318 |

| 4 | 2021-06-05 13:53:28.377359 | Books | Flipcart | 494.128492 |

| ... | ... | ... | ... | ... |

| 95 | 2021-07-19 13:53:28.377359 | Utility | Phone | 388.671213 |

| 96 | 2021-01-12 19:53:28.377359 | Books | Flipcart | 467.554562 |

| 97 | 2021-03-25 11:53:28.377359 | Utility | Phone | 320.789434 |

| 98 | 2021-05-13 15:53:28.377359 | Travel | Taxi | 442.096469 |

| 99 | 2020-10-11 16:53:28.377359 | Food | Hotel | 100.455501 |

100 rows × 4 columns

del wallet['Unnamed: 0']wallet| date | category | description | debit | ones | |

|---|---|---|---|---|---|

| 0 | 2021-03-07 14:53:28.377359 | Music | Amazon | 421.207327 | 1 |

| 1 | 2020-10-08 09:53:28.377359 | Food | Swiggy | 328.440080 | 1 |

| 2 | 2021-02-23 09:53:28.377359 | Books | Amazon | 244.679437 | 1 |

| 3 | 2020-11-01 14:53:28.377359 | Utility | Phone | 222.756318 | 1 |

| 4 | 2021-06-05 13:53:28.377359 | Books | Flipcart | 494.128492 | 1 |

| ... | ... | ... | ... | ... | ... |

| 95 | 2021-07-19 13:53:28.377359 | Utility | Phone | 388.671213 | 1 |

| 96 | 2021-01-12 19:53:28.377359 | Books | Flipcart | 467.554562 | 1 |

| 97 | 2021-03-25 11:53:28.377359 | Utility | Phone | 320.789434 | 1 |

| 98 | 2021-05-13 15:53:28.377359 | Travel | Taxi | 442.096469 | 1 |

| 99 | 2020-10-11 16:53:28.377359 | Food | Hotel | 100.455501 | 1 |

100 rows × 5 columns

wallet.groupby("category").sum(numeric_only=True)| debit | ones | |

|---|---|---|

| category | ||

| Books | 4929.750393 | 14 |

| Food | 8281.189173 | 27 |

| Music | 4233.451868 | 16 |

| Travel | 6052.931876 | 16 |

| Utility | 7562.267233 | 27 |

wallet.groupby("description").sum(numeric_only=True)| debit | ones | |

|---|---|---|

| description | ||

| Amazon | 2504.690567 | 9 |

| Amazon Kindle | 1389.052376 | 4 |

| Auto | 2210.428935 | 5 |

| Electricity | 2885.064355 | 12 |

| Flipcart | 2503.255216 | 7 |

| Hotel | 2752.174732 | 9 |

| Metro | 1216.463665 | 4 |

| Netflix | 1546.567562 | 6 |

| Phone | 4677.202878 | 15 |

| Swiggy | 1936.495366 | 8 |

| Taxi | 2626.039276 | 7 |

| Zomato | 3592.519075 | 10 |

| spotify | 1219.636541 | 4 |

wallet.groupby("description").max(numeric_only=True)| debit | ones | |

|---|---|---|

| description | ||

| Amazon | 498.100496 | 1 |

| Amazon Kindle | 497.770860 | 1 |

| Auto | 494.124399 | 1 |

| Electricity | 382.519510 | 1 |

| Flipcart | 494.128492 | 1 |

| Hotel | 483.315864 | 1 |

| Metro | 441.602143 | 1 |

| Netflix | 354.940241 | 1 |

| Phone | 499.858182 | 1 |

| Swiggy | 328.440080 | 1 |

| Taxi | 485.297743 | 1 |

| Zomato | 489.143483 | 1 |

| spotify | 415.372894 | 1 |

wallet.groupby("category").mean(numeric_only=True)| debit | ones | |

|---|---|---|

| category | ||

| Books | 352.125028 | 1.0 |

| Food | 306.710710 | 1.0 |

| Music | 264.590742 | 1.0 |

| Travel | 378.308242 | 1.0 |

| Utility | 280.083972 | 1.0 |

wallet.groupby("category").std(numeric_only=True)| debit | ones | |

|---|---|---|

| category | ||

| Books | 137.793128 | 0.0 |

| Food | 119.856277 | 0.0 |

| Music | 105.824988 | 0.0 |

| Travel | 96.400518 | 0.0 |

| Utility | 119.148546 | 0.0 |

wallet.groupby(['category', 'description']).sum(numeric_only=True)| debit | ones | ||

|---|---|---|---|

| category | description | ||

| Books | Amazon | 1037.442802 | 3 |

| Amazon Kindle | 1389.052376 | 4 | |

| Flipcart | 2503.255216 | 7 | |

| Food | Hotel | 2752.174732 | 9 |

| Swiggy | 1936.495366 | 8 | |

| Zomato | 3592.519075 | 10 | |

| Music | Amazon | 1467.247766 | 6 |

| Netflix | 1546.567562 | 6 | |

| spotify | 1219.636541 | 4 | |

| Travel | Auto | 2210.428935 | 5 |

| Metro | 1216.463665 | 4 | |

| Taxi | 2626.039276 | 7 | |

| Utility | Electricity | 2885.064355 | 12 |

| Phone | 4677.202878 | 15 |

stocks| value | low | high | volume | |

|---|---|---|---|---|

| APPLE | 234.5 | 233.0 | 240.32 | 100 |

| AT&T | 221.6 | 220.0 | 222.50 | 200 |

| IBM | 125.7 | 123.0 | 127.30 | 50 |

| NIKE | 100.5 | 104.0 | 105.00 | 1000 |

18.0.6 One more example

weatherdata_url = "https://notes.pipal.in/2017/arcesium-oct-advpython/HYDERABAD-weather.csv"weather = pd.read_csv(weatherdata_url)weather| Unnamed: 0 | city | month | year | maxtemp | mintemp | rainfall | |

|---|---|---|---|---|---|---|---|

| 0 | 0 | HYDERABAD | January | 1951 | 29.0 | 14.8 | 0.0 |

| 1 | 1 | HYDERABAD | January | 1952 | 29.1 | 13.6 | 0.0 |

| 2 | 2 | HYDERABAD | January | 1953 | 28.6 | 14.6 | 3.5 |

| 3 | 3 | HYDERABAD | January | 1954 | 28.2 | 13.9 | 0.0 |

| 4 | 4 | HYDERABAD | January | 1955 | 28.0 | 14.7 | 0.0 |

| ... | ... | ... | ... | ... | ... | ... | ... |

| 594 | 594 | HYDERABAD | December | 1996 | 28.3 | 14.9 | 0.0 |

| 595 | 595 | HYDERABAD | December | 1997 | 28.7 | 19.2 | 40.6 |

| 596 | 596 | HYDERABAD | December | 1998 | 28.7 | 12.8 | 0.0 |

| 597 | 597 | HYDERABAD | December | 1999 | 29.0 | 14.2 | 0.0 |

| 598 | 598 | HYDERABAD | December | 2000 | 29.6 | 13.3 | 1.0 |

599 rows × 7 columns

weather.groupby("year").mean(numeric_only=True)| Unnamed: 0 | maxtemp | mintemp | rainfall | |

|---|---|---|---|---|

| year | ||||

| 1951 | 274.333333 | 32.666667 | 20.233333 | 58.975000 |

| 1952 | 275.333333 | 31.975000 | 19.891667 | 46.741667 |

| 1953 | 276.333333 | 32.183333 | 20.266667 | 74.245455 |

| 1954 | 277.333333 | 31.525000 | 19.875000 | 70.366667 |

| 1955 | 278.333333 | 30.883333 | 19.725000 | 92.775000 |

| 1956 | 279.333333 | 30.783333 | 19.791667 | 64.941667 |

| 1957 | 280.333333 | 31.533333 | 20.016667 | 66.783333 |

| 1958 | 281.333333 | 31.733333 | 20.475000 | 76.216667 |

| 1959 | 282.333333 | 31.900000 | 20.358333 | 64.825000 |

| 1960 | 283.333333 | 31.841667 | 20.416667 | 57.775000 |

| 1961 | 284.333333 | 31.258333 | 20.225000 | 68.400000 |

| 1962 | 296.636364 | 30.418182 | 19.490909 | 107.118182 |

| 1963 | 286.250000 | 31.133333 | 19.308333 | 69.125000 |

| 1964 | 287.250000 | 32.150000 | 19.658333 | 58.400000 |

| 1965 | 288.250000 | 32.600000 | 19.541667 | 67.441667 |

| 1966 | 289.250000 | 32.666667 | 20.550000 | 55.358333 |

| 1967 | 290.250000 | 32.625000 | 19.483333 | 69.383333 |

| 1968 | 291.250000 | 32.416667 | 19.200000 | 53.250000 |

| 1969 | 292.250000 | 32.575000 | 20.408333 | 53.100000 |

| 1970 | 293.250000 | 32.041667 | 19.825000 | 95.566667 |

| 1971 | 294.250000 | 31.975000 | 20.008333 | 55.433333 |

| 1972 | 295.250000 | 32.633333 | 21.008333 | 42.958333 |

| 1973 | 296.250000 | 32.425000 | 21.200000 | 73.183333 |

| 1974 | 297.250000 | 32.175000 | 20.016667 | 56.283333 |

| 1975 | 298.250000 | 31.266667 | 20.258333 | 115.291667 |

| 1976 | 299.250000 | 32.050000 | 20.441667 | 66.075000 |

| 1977 | 300.250000 | 32.258333 | 20.775000 | 45.300000 |

| 1978 | 301.250000 | 31.575000 | 21.108333 | 93.116667 |

| 1979 | 302.250000 | 32.400000 | 21.616667 | 58.650000 |

| 1980 | 303.250000 | 32.816667 | 21.425000 | 49.325000 |

| 1981 | 304.250000 | 32.108333 | 20.750000 | 82.750000 |

| 1982 | 305.250000 | 32.241667 | 21.133333 | 63.891667 |

| 1983 | 306.250000 | 32.541667 | 21.000000 | 110.025000 |

| 1984 | 307.250000 | 32.483333 | 21.033333 | 64.083333 |

| 1985 | 308.250000 | 32.875000 | 20.975000 | 31.116667 |

| 1986 | 309.250000 | 32.800000 | 21.441667 | 51.775000 |

| 1987 | 310.250000 | 32.433333 | 21.200000 | 80.250000 |

| 1988 | 311.250000 | 32.525000 | 21.400000 | 76.458333 |

| 1989 | 312.250000 | 32.325000 | 20.825000 | 83.883333 |

| 1990 | 313.250000 | 31.541667 | 20.991667 | 76.666667 |

| 1991 | 314.250000 | 32.450000 | 21.416667 | 64.200000 |

| 1992 | 315.250000 | 32.683333 | 20.650000 | 63.716667 |

| 1993 | 316.250000 | 32.733333 | 20.516667 | 60.458333 |

| 1994 | 317.250000 | 32.225000 | 20.516667 | 68.325000 |

| 1995 | 318.250000 | 32.183333 | 20.916667 | 101.991667 |

| 1996 | 319.250000 | 32.633333 | 20.958333 | 80.958333 |

| 1997 | 320.250000 | 32.616667 | 21.025000 | 63.750000 |

| 1998 | 321.250000 | 33.125000 | 21.683333 | 78.516667 |

| 1999 | 322.250000 | 32.608333 | 20.341667 | 47.008333 |

| 2000 | 323.250000 | 32.583333 | 20.391667 | 87.066667 |

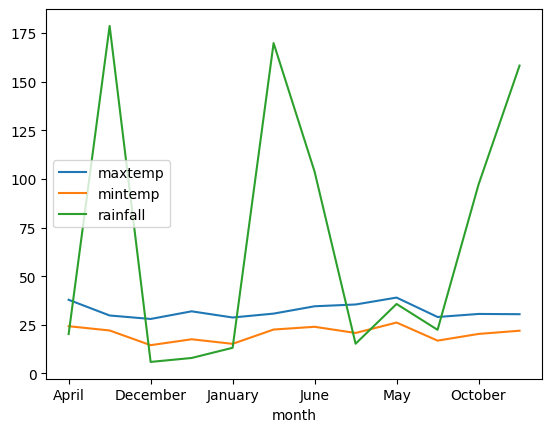

weather.groupby("month").mean(numeric_only=True)[['maxtemp','mintemp','rainfall']]| maxtemp | mintemp | rainfall | |

|---|---|---|---|

| month | |||

| April | 37.863265 | 24.273469 | 20.234694 |

| August | 29.786000 | 22.086000 | 178.690000 |

| December | 28.004000 | 14.526000 | 5.912000 |

| February | 31.932000 | 17.556000 | 7.940000 |

| January | 28.760000 | 15.214000 | 13.178000 |

| July | 30.754000 | 22.560000 | 169.860000 |

| June | 34.528000 | 23.976000 | 103.754000 |

| March | 35.444000 | 20.798000 | 15.264000 |

| May | 38.996000 | 26.160000 | 35.714000 |

| November | 29.016000 | 16.862000 | 22.420408 |

| October | 30.582000 | 20.306000 | 97.158000 |

| September | 30.452000 | 21.962000 | 158.292000 |

bymonth = weather.groupby("month").mean(numeric_only=True)[['maxtemp','mintemp','rainfall']]bymonth| maxtemp | mintemp | rainfall | |

|---|---|---|---|

| month | |||

| April | 37.863265 | 24.273469 | 20.234694 |

| August | 29.786000 | 22.086000 | 178.690000 |

| December | 28.004000 | 14.526000 | 5.912000 |

| February | 31.932000 | 17.556000 | 7.940000 |

| January | 28.760000 | 15.214000 | 13.178000 |

| July | 30.754000 | 22.560000 | 169.860000 |

| June | 34.528000 | 23.976000 | 103.754000 |

| March | 35.444000 | 20.798000 | 15.264000 |

| May | 38.996000 | 26.160000 | 35.714000 |

| November | 29.016000 | 16.862000 | 22.420408 |

| October | 30.582000 | 20.306000 | 97.158000 |

| September | 30.452000 | 21.962000 | 158.292000 |

bymonth.plot()--------------------------------------------------------------------------- ImportError Traceback (most recent call last) Cell In[175], line 1 ----> 1 bymonth.plot() File /opt/tljh/user/lib/python3.12/site-packages/pandas/plotting/_core.py:947, in PlotAccessor.__call__(self, *args, **kwargs) 946 def __call__(self, *args, **kwargs): --> 947 plot_backend = _get_plot_backend(kwargs.pop("backend", None)) 949 x, y, kind, kwargs = self._get_call_args( 950 plot_backend.__name__, self._parent, args, kwargs 951 ) 953 kind = self._kind_aliases.get(kind, kind) File /opt/tljh/user/lib/python3.12/site-packages/pandas/plotting/_core.py:1944, in _get_plot_backend(backend) 1941 if backend_str in _backends: 1942 return _backends[backend_str] -> 1944 module = _load_backend(backend_str) 1945 _backends[backend_str] = module 1946 return module File /opt/tljh/user/lib/python3.12/site-packages/pandas/plotting/_core.py:1874, in _load_backend(backend) 1872 module = importlib.import_module("pandas.plotting._matplotlib") 1873 except ImportError: -> 1874 raise ImportError( 1875 "matplotlib is required for plotting when the " 1876 'default backend "matplotlib" is selected.' 1877 ) from None 1878 return module 1880 found_backend = False ImportError: matplotlib is required for plotting when the default backend "matplotlib" is selected.

!pip install matplotlibDefaulting to user installation because normal site-packages is not writeable Collecting matplotlib Downloading matplotlib-3.10.8-cp312-cp312-manylinux2014_x86_64.manylinux_2_17_x86_64.whl.metadata (52 kB) Collecting contourpy>=1.0.1 (from matplotlib) Downloading contourpy-1.3.3-cp312-cp312-manylinux_2_27_x86_64.manylinux_2_28_x86_64.whl.metadata (5.5 kB) Collecting cycler>=0.10 (from matplotlib) Downloading cycler-0.12.1-py3-none-any.whl.metadata (3.8 kB) Collecting fonttools>=4.22.0 (from matplotlib) Downloading fonttools-4.61.1-cp312-cp312-manylinux1_x86_64.manylinux2014_x86_64.manylinux_2_17_x86_64.manylinux_2_5_x86_64.whl.metadata (114 kB) Collecting kiwisolver>=1.3.1 (from matplotlib) Downloading kiwisolver-1.4.9-cp312-cp312-manylinux2014_x86_64.manylinux_2_17_x86_64.whl.metadata (6.3 kB) Requirement already satisfied: numpy>=1.23 in /opt/tljh/user/lib/python3.12/site-packages (from matplotlib) (2.3.5) Requirement already satisfied: packaging>=20.0 in /opt/tljh/user/lib/python3.12/site-packages (from matplotlib) (24.1) Collecting pillow>=8 (from matplotlib) Downloading pillow-12.1.1-cp312-cp312-manylinux_2_27_x86_64.manylinux_2_28_x86_64.whl.metadata (8.8 kB) Collecting pyparsing>=3 (from matplotlib) Downloading pyparsing-3.3.2-py3-none-any.whl.metadata (5.8 kB) Requirement already satisfied: python-dateutil>=2.7 in /opt/tljh/user/lib/python3.12/site-packages (from matplotlib) (2.9.0.post0) Requirement already satisfied: six>=1.5 in /opt/tljh/user/lib/python3.12/site-packages (from python-dateutil>=2.7->matplotlib) (1.17.0) Downloading matplotlib-3.10.8-cp312-cp312-manylinux2014_x86_64.manylinux_2_17_x86_64.whl (8.7 MB) ━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 8.7/8.7 MB 65.9 MB/s eta 0:00:00 Downloading contourpy-1.3.3-cp312-cp312-manylinux_2_27_x86_64.manylinux_2_28_x86_64.whl (362 kB) Downloading cycler-0.12.1-py3-none-any.whl (8.3 kB) Downloading fonttools-4.61.1-cp312-cp312-manylinux1_x86_64.manylinux2014_x86_64.manylinux_2_17_x86_64.manylinux_2_5_x86_64.whl (5.0 MB) ━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 5.0/5.0 MB 119.3 MB/s eta 0:00:00 Downloading kiwisolver-1.4.9-cp312-cp312-manylinux2014_x86_64.manylinux_2_17_x86_64.whl (1.5 MB) ━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 1.5/1.5 MB 148.2 MB/s eta 0:00:00 Downloading pillow-12.1.1-cp312-cp312-manylinux_2_27_x86_64.manylinux_2_28_x86_64.whl (7.0 MB) ━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 7.0/7.0 MB 104.6 MB/s eta 0:00:00 Downloading pyparsing-3.3.2-py3-none-any.whl (122 kB) Installing collected packages: pyparsing, pillow, kiwisolver, fonttools, cycler, contourpy, matplotlib WARNING: The scripts fonttools, pyftmerge, pyftsubset and ttx are installed in '/home/jupyter-vikrant/.local/bin' which is not on PATH. Consider adding this directory to PATH or, if you prefer to suppress this warning, use --no-warn-script-location. Successfully installed contourpy-1.3.3 cycler-0.12.1 fonttools-4.61.1 kiwisolver-1.4.9 matplotlib-3.10.8 pillow-12.1.1 pyparsing-3.3.2

%matplotlib inlinebymonth.plot()

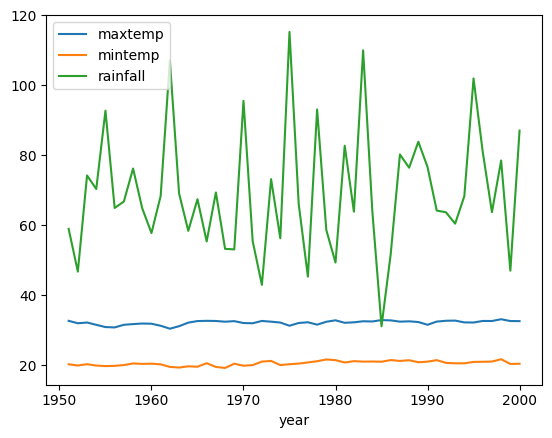

byyear = weather.groupby("year").mean(numeric_only=True)del byyear['Unnamed: 0']byyear| maxtemp | mintemp | rainfall | |

|---|---|---|---|

| year | |||

| 1951 | 32.666667 | 20.233333 | 58.975000 |

| 1952 | 31.975000 | 19.891667 | 46.741667 |

| 1953 | 32.183333 | 20.266667 | 74.245455 |

| 1954 | 31.525000 | 19.875000 | 70.366667 |

| 1955 | 30.883333 | 19.725000 | 92.775000 |

| 1956 | 30.783333 | 19.791667 | 64.941667 |

| 1957 | 31.533333 | 20.016667 | 66.783333 |

| 1958 | 31.733333 | 20.475000 | 76.216667 |

| 1959 | 31.900000 | 20.358333 | 64.825000 |

| 1960 | 31.841667 | 20.416667 | 57.775000 |

| 1961 | 31.258333 | 20.225000 | 68.400000 |

| 1962 | 30.418182 | 19.490909 | 107.118182 |

| 1963 | 31.133333 | 19.308333 | 69.125000 |

| 1964 | 32.150000 | 19.658333 | 58.400000 |

| 1965 | 32.600000 | 19.541667 | 67.441667 |

| 1966 | 32.666667 | 20.550000 | 55.358333 |

| 1967 | 32.625000 | 19.483333 | 69.383333 |

| 1968 | 32.416667 | 19.200000 | 53.250000 |

| 1969 | 32.575000 | 20.408333 | 53.100000 |

| 1970 | 32.041667 | 19.825000 | 95.566667 |

| 1971 | 31.975000 | 20.008333 | 55.433333 |

| 1972 | 32.633333 | 21.008333 | 42.958333 |

| 1973 | 32.425000 | 21.200000 | 73.183333 |

| 1974 | 32.175000 | 20.016667 | 56.283333 |

| 1975 | 31.266667 | 20.258333 | 115.291667 |

| 1976 | 32.050000 | 20.441667 | 66.075000 |

| 1977 | 32.258333 | 20.775000 | 45.300000 |

| 1978 | 31.575000 | 21.108333 | 93.116667 |

| 1979 | 32.400000 | 21.616667 | 58.650000 |

| 1980 | 32.816667 | 21.425000 | 49.325000 |

| 1981 | 32.108333 | 20.750000 | 82.750000 |

| 1982 | 32.241667 | 21.133333 | 63.891667 |

| 1983 | 32.541667 | 21.000000 | 110.025000 |

| 1984 | 32.483333 | 21.033333 | 64.083333 |

| 1985 | 32.875000 | 20.975000 | 31.116667 |

| 1986 | 32.800000 | 21.441667 | 51.775000 |

| 1987 | 32.433333 | 21.200000 | 80.250000 |

| 1988 | 32.525000 | 21.400000 | 76.458333 |

| 1989 | 32.325000 | 20.825000 | 83.883333 |

| 1990 | 31.541667 | 20.991667 | 76.666667 |

| 1991 | 32.450000 | 21.416667 | 64.200000 |

| 1992 | 32.683333 | 20.650000 | 63.716667 |

| 1993 | 32.733333 | 20.516667 | 60.458333 |

| 1994 | 32.225000 | 20.516667 | 68.325000 |

| 1995 | 32.183333 | 20.916667 | 101.991667 |

| 1996 | 32.633333 | 20.958333 | 80.958333 |

| 1997 | 32.616667 | 21.025000 | 63.750000 |

| 1998 | 33.125000 | 21.683333 | 78.516667 |

| 1999 | 32.608333 | 20.341667 | 47.008333 |

| 2000 | 32.583333 | 20.391667 | 87.066667 |

byyear.plot()

18.0.7 filtering

stocks| value | low | high | volume | |

|---|---|---|---|---|

| APPLE | 234.5 | 233.0 | 240.32 | 100 |

| AT&T | 221.6 | 220.0 | 222.50 | 200 |

| IBM | 125.7 | 123.0 | 127.30 | 50 |

| NIKE | 100.5 | 104.0 | 105.00 | 1000 |

stocks.value > 200 # any camparison of series will result into a series of booleansAPPLE True

AT&T True

IBM False

NIKE False

Name: value, dtype: boolstocks[stocks.value > 200]| value | low | high | volume | |

|---|---|---|---|---|

| APPLE | 234.5 | 233.0 | 240.32 | 100 |

| AT&T | 221.6 | 220.0 | 222.50 | 200 |

wallet| date | category | description | debit | ones | |

|---|---|---|---|---|---|

| 0 | 2021-03-07 14:53:28.377359 | Music | Amazon | 421.207327 | 1 |

| 1 | 2020-10-08 09:53:28.377359 | Food | Swiggy | 328.440080 | 1 |

| 2 | 2021-02-23 09:53:28.377359 | Books | Amazon | 244.679437 | 1 |

| 3 | 2020-11-01 14:53:28.377359 | Utility | Phone | 222.756318 | 1 |

| 4 | 2021-06-05 13:53:28.377359 | Books | Flipcart | 494.128492 | 1 |

| ... | ... | ... | ... | ... | ... |

| 95 | 2021-07-19 13:53:28.377359 | Utility | Phone | 388.671213 | 1 |

| 96 | 2021-01-12 19:53:28.377359 | Books | Flipcart | 467.554562 | 1 |

| 97 | 2021-03-25 11:53:28.377359 | Utility | Phone | 320.789434 | 1 |

| 98 | 2021-05-13 15:53:28.377359 | Travel | Taxi | 442.096469 | 1 |

| 99 | 2020-10-11 16:53:28.377359 | Food | Hotel | 100.455501 | 1 |

100 rows × 5 columns

wallet[wallet.debit < 400]| date | category | description | debit | ones | |

|---|---|---|---|---|---|

| 1 | 2020-10-08 09:53:28.377359 | Food | Swiggy | 328.440080 | 1 |

| 2 | 2021-02-23 09:53:28.377359 | Books | Amazon | 244.679437 | 1 |

| 3 | 2020-11-01 14:53:28.377359 | Utility | Phone | 222.756318 | 1 |

| 5 | 2021-07-28 19:53:28.377359 | Utility | Electricity | 219.941711 | 1 |

| 6 | 2021-04-16 11:53:28.377359 | Books | Amazon Kindle | 270.322595 | 1 |

| ... | ... | ... | ... | ... | ... |

| 92 | 2021-01-12 19:53:28.377359 | Travel | Taxi | 356.842638 | 1 |

| 93 | 2021-01-11 09:53:28.377359 | Utility | Electricity | 111.720809 | 1 |

| 95 | 2021-07-19 13:53:28.377359 | Utility | Phone | 388.671213 | 1 |

| 97 | 2021-03-25 11:53:28.377359 | Utility | Phone | 320.789434 | 1 |

| 99 | 2020-10-11 16:53:28.377359 | Food | Hotel | 100.455501 | 1 |

70 rows × 5 columns

wallet[wallet.debit > 400]| date | category | description | debit | ones | |

|---|---|---|---|---|---|

| 0 | 2021-03-07 14:53:28.377359 | Music | Amazon | 421.207327 | 1 |

| 4 | 2021-06-05 13:53:28.377359 | Books | Flipcart | 494.128492 | 1 |

| 7 | 2021-02-15 10:53:28.377359 | Food | Zomato | 457.183104 | 1 |

| 9 | 2020-11-29 14:53:28.377359 | Travel | Auto | 443.618884 | 1 |

| 11 | 2021-07-24 13:53:28.377359 | Food | Zomato | 434.495468 | 1 |

| 14 | 2021-06-09 13:53:28.377359 | Travel | Taxi | 485.297743 | 1 |

| 18 | 2021-05-08 15:53:28.377359 | Travel | Auto | 433.822404 | 1 |

| 22 | 2021-06-24 15:53:28.377359 | Food | Zomato | 489.143483 | 1 |

| 24 | 2021-05-31 11:53:28.377359 | Books | Amazon | 498.100496 | 1 |

| 25 | 2021-05-21 14:53:28.377359 | Food | Hotel | 483.315864 | 1 |

| 33 | 2021-05-14 18:53:28.377359 | Food | Hotel | 449.248030 | 1 |

| 34 | 2021-05-14 10:53:28.377359 | Utility | Phone | 499.858182 | 1 |

| 35 | 2021-02-18 18:53:28.377359 | Travel | Metro | 441.602143 | 1 |

| 36 | 2020-12-10 10:53:28.377359 | Travel | Auto | 472.941439 | 1 |

| 38 | 2021-08-15 10:53:28.377359 | Travel | Auto | 494.124399 | 1 |

| 41 | 2021-02-20 19:53:28.377359 | Utility | Phone | 425.187191 | 1 |

| 43 | 2020-09-21 12:53:28.377359 | Utility | Phone | 486.033933 | 1 |

| 50 | 2020-12-24 11:53:28.377359 | Food | Zomato | 463.001875 | 1 |

| 52 | 2021-03-26 09:53:28.377359 | Travel | Taxi | 403.610070 | 1 |

| 55 | 2021-01-21 19:53:28.377359 | Books | Flipcart | 423.749708 | 1 |

| 62 | 2021-01-27 19:53:28.377359 | Books | Amazon Kindle | 497.770860 | 1 |

| 75 | 2021-03-03 12:53:28.377359 | Food | Hotel | 425.625291 | 1 |

| 77 | 2021-01-18 14:53:28.377359 | Books | Amazon Kindle | 482.152343 | 1 |

| 78 | 2020-09-09 16:53:28.377359 | Music | spotify | 415.372894 | 1 |

| 81 | 2020-10-29 16:53:28.377359 | Food | Hotel | 470.080995 | 1 |

| 82 | 2020-09-22 09:53:28.377359 | Music | spotify | 411.142701 | 1 |

| 83 | 2021-03-18 09:53:28.377359 | Books | Flipcart | 451.584407 | 1 |

| 94 | 2021-01-04 13:53:28.377359 | Utility | Phone | 431.185537 | 1 |

| 96 | 2021-01-12 19:53:28.377359 | Books | Flipcart | 467.554562 | 1 |

| 98 | 2021-05-13 15:53:28.377359 | Travel | Taxi | 442.096469 | 1 |

wallet[wallet.category=="Music"]| date | category | description | debit | ones | |

|---|---|---|---|---|---|

| 0 | 2021-03-07 14:53:28.377359 | Music | Amazon | 421.207327 | 1 |

| 12 | 2021-07-24 14:53:28.377359 | Music | Amazon | 329.536003 | 1 |

| 23 | 2020-12-11 10:53:28.377359 | Music | Netflix | 354.940241 | 1 |

| 37 | 2021-04-18 16:53:28.377359 | Music | Amazon | 266.069078 | 1 |

| 49 | 2021-03-16 09:53:28.377359 | Music | spotify | 232.303402 | 1 |

| 54 | 2020-11-16 10:53:28.377359 | Music | spotify | 160.817543 | 1 |

| 65 | 2020-10-23 18:53:28.377359 | Music | Netflix | 188.748743 | 1 |

| 67 | 2021-07-31 14:53:28.377359 | Music | Netflix | 324.786917 | 1 |

| 76 | 2020-11-17 09:53:28.377359 | Music | Netflix | 197.534600 | 1 |

| 78 | 2020-09-09 16:53:28.377359 | Music | spotify | 415.372894 | 1 |

| 79 | 2021-08-17 09:53:28.377359 | Music | Netflix | 321.763416 | 1 |

| 82 | 2020-09-22 09:53:28.377359 | Music | spotify | 411.142701 | 1 |

| 84 | 2020-09-21 10:53:28.377359 | Music | Netflix | 158.793646 | 1 |

| 85 | 2021-01-12 09:53:28.377359 | Music | Amazon | 130.374908 | 1 |

| 89 | 2021-04-12 14:53:28.377359 | Music | Amazon | 218.487173 | 1 |

| 90 | 2020-12-01 14:53:28.377359 | Music | Amazon | 101.573276 | 1 |

music = wallet[wallet.category=="Music"]music| date | category | description | debit | ones | |

|---|---|---|---|---|---|

| 0 | 2021-03-07 14:53:28.377359 | Music | Amazon | 421.207327 | 1 |

| 12 | 2021-07-24 14:53:28.377359 | Music | Amazon | 329.536003 | 1 |

| 23 | 2020-12-11 10:53:28.377359 | Music | Netflix | 354.940241 | 1 |

| 37 | 2021-04-18 16:53:28.377359 | Music | Amazon | 266.069078 | 1 |

| 49 | 2021-03-16 09:53:28.377359 | Music | spotify | 232.303402 | 1 |

| 54 | 2020-11-16 10:53:28.377359 | Music | spotify | 160.817543 | 1 |

| 65 | 2020-10-23 18:53:28.377359 | Music | Netflix | 188.748743 | 1 |

| 67 | 2021-07-31 14:53:28.377359 | Music | Netflix | 324.786917 | 1 |

| 76 | 2020-11-17 09:53:28.377359 | Music | Netflix | 197.534600 | 1 |

| 78 | 2020-09-09 16:53:28.377359 | Music | spotify | 415.372894 | 1 |

| 79 | 2021-08-17 09:53:28.377359 | Music | Netflix | 321.763416 | 1 |

| 82 | 2020-09-22 09:53:28.377359 | Music | spotify | 411.142701 | 1 |

| 84 | 2020-09-21 10:53:28.377359 | Music | Netflix | 158.793646 | 1 |

| 85 | 2021-01-12 09:53:28.377359 | Music | Amazon | 130.374908 | 1 |

| 89 | 2021-04-12 14:53:28.377359 | Music | Amazon | 218.487173 | 1 |

| 90 | 2020-12-01 14:53:28.377359 | Music | Amazon | 101.573276 | 1 |

weather[weather.year==1960]| Unnamed: 0 | city | month | year | maxtemp | mintemp | rainfall | |

|---|---|---|---|---|---|---|---|

| 9 | 9 | HYDERABAD | January | 1960 | 28.4 | 17.0 | 0.0 |

| 59 | 59 | HYDERABAD | February | 1960 | 31.6 | 15.4 | 0.0 |

| 109 | 109 | HYDERABAD | March | 1960 | 33.4 | 20.7 | 29.5 |

| 159 | 159 | HYDERABAD | April | 1960 | 37.1 | 23.2 | 3.5 |

| 208 | 208 | HYDERABAD | May | 1960 | 38.2 | 25.6 | 41.9 |

| 258 | 258 | HYDERABAD | June | 1960 | 33.4 | 23.8 | 72.2 |

| 308 | 308 | HYDERABAD | July | 1960 | 30.9 | 23.3 | 95.4 |

| 358 | 358 | HYDERABAD | August | 1960 | 31.4 | 22.8 | 26.1 |

| 408 | 408 | HYDERABAD | September | 1960 | 30.1 | 22.3 | 361.7 |

| 458 | 458 | HYDERABAD | October | 1960 | 30.5 | 19.4 | 13.9 |

| 508 | 508 | HYDERABAD | November | 1960 | 28.7 | 16.7 | 40.0 |

| 558 | 558 | HYDERABAD | December | 1960 | 28.4 | 14.8 | 9.1 |

music = wallet[ (wallet.category=="Music") & (wallet.description=="Amazon")]music| date | category | description | debit | ones | |

|---|---|---|---|---|---|

| 0 | 2021-03-07 14:53:28.377359 | Music | Amazon | 421.207327 | 1 |

| 12 | 2021-07-24 14:53:28.377359 | Music | Amazon | 329.536003 | 1 |

| 37 | 2021-04-18 16:53:28.377359 | Music | Amazon | 266.069078 | 1 |

| 85 | 2021-01-12 09:53:28.377359 | Music | Amazon | 130.374908 | 1 |

| 89 | 2021-04-12 14:53:28.377359 | Music | Amazon | 218.487173 | 1 |

| 90 | 2020-12-01 14:53:28.377359 | Music | Amazon | 101.573276 | 1 |

18.0.8 Writeing dataframe

df.to_csv("df.csv")!cat df.csv,symbol,price,change,volume

0,XFNBI,154.27522023650917,2.871245637237712,3926

1,ORGHT,117.9767021797848,4.539536223184663,586

2,VEBHV,113.3520799776703,0.6635982458596046,3688

3,BYRIK,104.2138293580797,2.626015334200588,1767

4,VCRRD,155.43160467760868,0.4681719455962513,641

5,PEQVY,181.21666821143089,0.4272971174931816,2738

6,LQTQZ,168.33573338890363,3.521911607612079,2670

7,HPDAK,155.96008397206708,1.7239221076463696,4259

8,XLHUI,174.46964754068694,3.745170925696039,2770

9,JEAZX,148.7162928469953,2.6624961725339205,1614

10,QOZAM,192.1176069819026,4.139241768741275,726

11,XJAHU,150.20434996488757,3.1065263183864253,2688

12,VLXDC,178.73486584779386,3.867853214120204,4103

13,PJQEK,199.0461185921472,3.2096859326164178,1592

14,DWJDV,148.7534049268761,3.331681968931371,2177

15,ORJMD,174.11752806601532,3.180221402115432,909

16,DLJGR,117.33043838185898,0.3468621177713465,4092

17,YCHHM,144.8746810221045,3.3530551951924155,1571

18,WFNNB,147.65332106681385,3.7760281088943017,3195

19,YNRZG,128.86957732022677,4.569918613496244,2713Writing in excel

with pd.ExcelWriter("dataframe.xlsx") as writer:

df.to_excel(writer, sheet_name="dataframe")18.0.9 Documentation

https://pandas.pydata.org/docs/getting_started/index.html#getting-started

import osos.re